MLSEC 2022 – The Winners and Some Closing Comments

And the winners of the MLSEC 2022 challenge are:

Facial Recognition First place – Alex Meinke

Facial Recognition Second place – Zhe Zhao

Phishing First place – Biagio Montaruli

Phishing Second place – Tobia Righi

Other write-ups: Facial Recognition – Niklas Bunzel and Lukas Graner

We are really grateful to everyone who participated in this challenge, and special thanks to everyone who provided a write-up. And congrats to the winners!

This year was the 4th MLSEC challenge. If you are interested in the previous ones:

From a technical point of view, the main changes this year were:

- We dropped the malware binary offensive and defensive tracks. Do not worry, they might come back in the future.

- We kept the phishing offensive track but made it harder.

- We added the face recognition track.

- We allowed “unlimited” API calls.

Phishing Advances

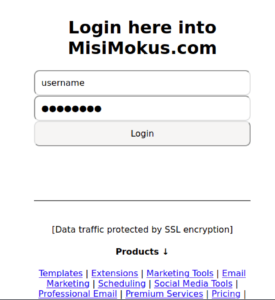

The goal of the phishing track was to modify the phishing HTML pages so that each page would still render exactly the same but would no longer be detected as malicious by the ML models.

Last year, we only trained the phishing detection ML models on static HTML files. From the beginning, it was clear that encoding the HTML and decoding it on the fly might be a valid attack vector, and it was!

This year, to counter this, we also interpreted the HTML, extracted the Document Object Model (DOM), and trained some of the models on the DOM itself. With this change, last year's encode-and-decode attacks no longer worked.
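To make the difference between static HTML and the interpreted DOM more concrete, here is a minimal sketch. It is not the competition's feature extraction, just an illustration using Python's standard `html.parser`. The sample page, the `wrap_encoded` helper, and the choice of Base64 with `document.write(atob(...))` are all assumptions made for the example.

```python
import base64
from collections import Counter
from html.parser import HTMLParser


class TagCounter(HTMLParser):
    """Counts start tags in the raw HTML source, without running any script."""

    def __init__(self):
        super().__init__()
        self.tags = Counter()

    def handle_starttag(self, tag, attrs):
        self.tags[tag] += 1


def static_tag_counts(html: str) -> Counter:
    """Return the tag counts a purely static model could see."""
    parser = TagCounter()
    parser.feed(html)
    parser.close()
    return parser.tags


# Hypothetical sample page, for illustration only.
PLAIN = (
    '<html><body><form action="/login">'
    '<input name="user"><input name="pass" type="password">'
    "</form></body></html>"
)


def wrap_encoded(html: str) -> str:
    """Hypothetical wrapper: the page body is only produced when a browser runs the script."""
    payload = base64.b64encode(html.encode("utf-8")).decode("ascii")
    return f'<html><body><script>document.write(atob("{payload}"))</script></body></html>'


if __name__ == "__main__":
    print(static_tag_counts(PLAIN))                # form and input tags are visible
    print(static_tag_counts(wrap_encoded(PLAIN)))  # only html, body, and script remain
```

A static parser sees the form fields in the first page and nothing but a script tag in the second, even though a browser would render both the same way. A model trained on the DOM after interpretation sees the form in both cases.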

Facial Recognition Challenge

For this track, Adversa.ai prepared ten images containing celebrity faces. The goal was to modify each original image so that the ML model would recognize it as one of the other celebrities. Each of the ten images had to be modified once for each of the nine other celebrities, for a total of 90 images.
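The count of 90 is simply every ordered (source, target) pair of distinct celebrities. A small sketch, with placeholder labels standing in for the actual celebrities (the names are hypothetical):

```python
from itertools import permutations

# Placeholder labels; the real challenge used ten celebrity identities.
celebrities = [f"celebrity_{i}" for i in range(10)]

# Every ordered pair (source image, target identity) with source != target.
attack_pairs = list(permutations(celebrities, 2))

if __name__ == "__main__":
    print(len(attack_pairs))  # 10 sources x 9 targets = 90
```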

Two scores were displayed to the users: “confidence” and “stealthiness”. Confidence showed the probability that an adversarial image would be classified as the target class. Stealthiness showed the structural similarity of an adversarial image to the source image. This year, only the confidence score went into the scoreboard, and stealthiness was used for informational purposes only.
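For readers unfamiliar with structural similarity, here is a rough sketch of the idea. It assumes the stealthiness score is in the spirit of the standard SSIM index; the exact formula used in the challenge is not stated here. The sketch computes a single global SSIM value over flat lists of grayscale pixels, whereas real implementations usually average SSIM over local windows. The function name `global_ssim` is made up for this example.

```python
from statistics import fmean


def global_ssim(x, y, dynamic_range=255.0):
    """Single-window SSIM over two equal-length sequences of grayscale pixel values."""
    if len(x) != len(y) or not x:
        raise ValueError("inputs must be non-empty and have the same length")

    # Standard SSIM stabilizing constants (K1 = 0.01, K2 = 0.03).
    c1 = (0.01 * dynamic_range) ** 2
    c2 = (0.03 * dynamic_range) ** 2

    mu_x, mu_y = fmean(x), fmean(y)
    var_x = fmean((a - mu_x) ** 2 for a in x)
    var_y = fmean((b - mu_y) ** 2 for b in y)
    cov = fmean((a - mu_x) * (b - mu_y) for a, b in zip(x, y))

    numerator = (2 * mu_x * mu_y + c1) * (2 * cov + c2)
    denominator = (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    return numerator / denominator


if __name__ == "__main__":
    source = [10, 50, 90, 130, 170, 210]
    slightly_changed = [12, 47, 95, 128, 175, 205]
    inverted = [255 - v for v in source]
    print(global_ssim(source, source))            # identical images score 1 (up to rounding)
    print(global_ssim(source, slightly_changed))  # small perturbation stays close to 1
    print(global_ssim(source, inverted))          # inverted image scores much lower
```

A stealthy adversarial image is one whose score stays close to 1 against the source image while the classifier's confidence in the target class goes up.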

As the winner already noted, there is room for improvement for next year’s facial recognition challenge, like using the stealthiness score more.

General Improvements

This year, we spent a significant amount of time upgrading the infrastructure, and instead of running it directly on the C64 Linux server, we moved the environment to Docker. In the beginning, I was pretty excited about the gains we could have through this improvement. Unfortunately, the migration consumed more energy than I expected. Fun exercises like renewing Let’s Encrypt certificates became a lot more complicated than they used to be. But it clearly paid off, since now the dev environment on macOS and the dev environment on Linux could be almost the same, and using commands like

docker-compose -f docker-compose.yml -f docker-compose.dev.yml up

really helped keep things nice and tidy. In the dev YAML, I could now override the parameters that differ from PROD, and all is good. I know this is not news to people living in the Docker world, but I learned these things along the way. Luckily, this year I had the helping hand of Marton Bak, and I did not have to worry about the server going down while I was on an intercontinental flight.

API Stats

After careful evaluation and testing </sarcasm> we decided to drop all limits on API calls. There were no rate limits, and the number of API calls was not counted in the final results. As the famous quote goes, “60% of the time, it works every time”… this kinda affected the stability of our systems. We still have to dig deeper into what exactly went wrong, but the good old “have you tried turning it off and on again” was the easiest fix during the competition. Sorry for that. On the other hand, this year our infra handled orders of magnitude more requests than in previous years. We had a total of 891,640 Facial Recognition API POST requests (which resulted in 1,643,441 backend requests) and 78,214 phishing API POST requests.

Interestingly, the phishing track winner did not use the most queries. For the Facial Recognition track, the winner used the most queries. Conclusions? None. Just an interesting fact.