MLSEC 2021 Anti-phishing Evasion Track: Task, Results, and Winning Solutions

In this year’s MLSEC competition, the Attacker Challenge included a new anti-phishing evasion track. The phishing detector was provided by CUJO AI, and it contained an HTML-based machine learning (ML) model. Participants had to modify malicious phishing websites so that they evaded the phishing detector while their rendering stayed the same. The new track encouraged broader participation in MLSEC: modifying HTML is easier than modifying malicious binaries, which lowers the barrier to entry.

Setting up the Task

As the organizers, we wanted to prepare a task that leads to an exciting competition and also brings value for the industry. First, we thought that providing a diverse set of phishing websites would do the job, but it quickly became apparent that this was not enough.

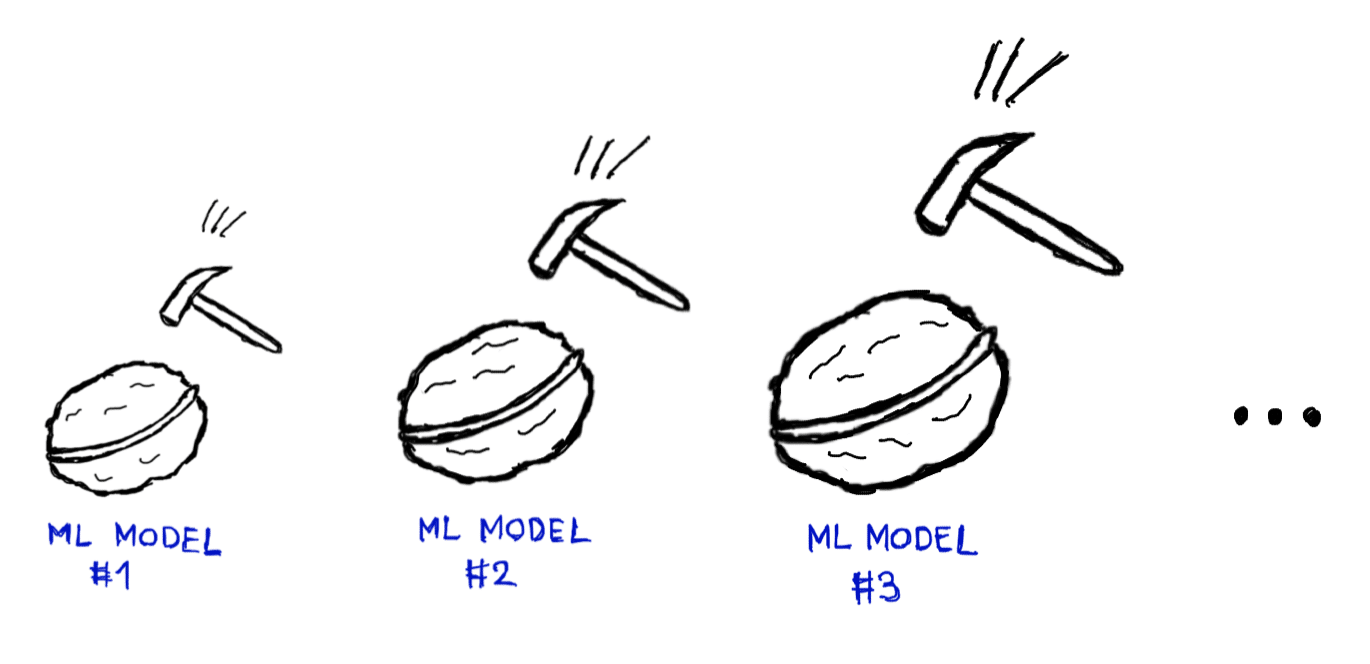

In this task, humans try to fool the machine with tricks. It is natural to expect that contestants will come up with ideas that are not specific to a given website (i.e. the input). Such a trick is likely either to fail on every input or to succeed on all of them. An all-or-nothing setup like this does not lead to a good competition. To have better control over the difficulty of the task, we decided to introduce a series of ML models that are increasingly harder to crack.

Model #1 received all input features without any processing. Model #2 received a slightly processed input vector: for a selected set of features, we restricted the value to a given interval, meaning that the contestants could not fool the machine by setting these features to extreme values. For each subsequent ML model, we applied the same trimming scheme to a gradually growing set of features. In theory, we could have provided inherently different ML models, but feature trimming seemed a simpler and better approach to control the difficulty of the task.
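The trimming scheme can be illustrated with a minimal Python sketch. The function name, the feature indices, and the intervals below are hypothetical and chosen only for illustration; the actual features and bounds used in the competition are not described here.

```python
def trim_features(features, bounds):
    """Clip selected features to a fixed interval; leave the rest untouched.

    `bounds` maps a feature index to a (low, high) interval.
    """
    trimmed = list(features)
    for index, (low, high) in bounds.items():
        trimmed[index] = min(max(trimmed[index], low), high)
    return trimmed


# Hypothetical bounds: each later model trims a larger set of features.
MODEL_BOUNDS = [
    {},                               # model 1: raw input
    {0: (0.0, 50.0)},                 # model 2: one trimmed feature
    {0: (0.0, 50.0), 2: (0.0, 1.0)},  # model 3: two trimmed features
]

# An input with an extreme value in feature 0, as an attacker might craft.
raw = [10000.0, 3.0, 7.5]
for bounds in MODEL_BOUNDS:
    print(trim_features(raw, bounds))
```

With trimming in place, pushing a feature far outside its usual range has no more effect than setting it to the interval boundary, which is why each additional trimmed feature removes one class of tricks.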

As inputs, we prepared artificial phishing websites. Each website was provided as an HTML file. All of them used internal resources only: CSS and JavaScript libraries were inlined, and images were included as Base64-encoded blobs. We tried to make the input set diverse, although the depth of the task did not come from the diversity of the inputs, but from the increasingly harder-to-evade models.

In total, we provided 10 phishing websites and 7 ML models. The source code of the websites had to be modified so that their rendering remained the same (according to a headless Chrome browser), but the ML models considered the websites legitimate. Legitimate means that the probability output of the ML model was below 0.1. Evading a single ML model with a single input was worth 1 point, so the maximum achievable score was 7 × 10 = 70 points. In case of a tie, the team with the lower number of API calls was ranked higher (submitting a full solution required 70 API calls).
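The scoring and tie-breaking rules can be sketched in a few lines of Python. This is an illustration of the rules as stated above, not the organizers' scoring code; the function names and data layout are assumptions, and the rendering check is assumed to have already passed for every probability that is counted.

```python
THRESHOLD = 0.1  # a site counts as "legitimate" below this probability


def score(probabilities):
    """Count evaded (model, site) pairs.

    `probabilities[m][s]` is the output of model m on modified site s.
    Assumption: only submissions whose rendering was preserved appear here.
    """
    return sum(p < THRESHOLD for row in probabilities for p in row)


def rank(teams):
    """Order (name, score, api_calls) tuples: higher score first,
    fewer API calls first among equal scores."""
    return sorted(teams, key=lambda team: (-team[1], team[2]))


# A full solution: 7 models x 10 sites, all below the threshold.
full = [[0.05] * 10 for _ in range(7)]
print(score(full))

# Tie-break on API calls for teams that share the same score.
print(rank([("pip", 70, 608), ("npttlpwx", 70, 320), ("qjykdxju", 70, 343)]))
```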

Internal testing showed that the maximum score was achievable, although not easily: a few days of intense effort were needed. We were excited to see how the contestants would perform on the task.

Anti-phishing Competition Results

The Anti-Phishing Evasion track of MLSEC 2021 started on Aug 06 and ended on Sept 17. There were 9 participants in total. The final leaderboard was the following:

| Rank | Nickname | Score | # API Calls |
|------|----------|-------|-------------|
| #1 | npttlpwx | 70 | 320 |
| #2 | qjykdxju | 70 | 343 |
| #3 | pip | 70 | 608 |
| #4 | mlsmgfm | 70 | 9982 |
| #5 | vftuemab | 48 | 301 |
| #6 | zwcreatephoton | 40 | 1113 |
| #7 | amsqr | 36 | 129 |
| #8 | qqtenell | 4 | 98 |
| #9 | nqiarajv | 3 | 110222 |

Four teams were able to finish with 70 points, the maximum score. Our internal testing used about 5000 API calls to achieve the maximum score, even though the testers had some knowledge about the internals of the system. Therefore, the 320, 343 and 608 API calls used by the first three teams are exceptionally good results.

The 3rd Place Solution

The man behind the third place winner “pip” is Ryan Reeves (USA). He describes his solution in this excellent write-up. His strategy was to:

1. Devise a high fidelity method utilizing JavaScript to recreate the phishing page.

2. Find a benign HTML template which would bypass all 7 anti-phishing models.

3. Incrementally combine the malicious JavaScript with the benign HTML template.

As for recreating the phishing page, he first tried to inject the original HTML source into an iframe (or other) element as a Base64 blob. This approach did not work, because iframe rendering differed slightly from normal rendering, so the solution was rejected because of a screenshot hash change. Then, he decided to use the document.write() method to inject the Base64-encoded source of the original website, as it keeps the rendering intact.
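The document.write() idea can be sketched as a small Python helper that produces the wrapper markup. This is a minimal illustration, not the code from the write-up: the function name is hypothetical, and the sketch assumes ASCII-only HTML, because atob() returns one character per byte and non-ASCII pages would need additional decoding in the browser.

```python
import base64


def wrap_with_document_write(original_html):
    """Return a script element that rebuilds `original_html` in the browser.

    Assumption: the page is ASCII-only (encode() raises otherwise).
    """
    payload = base64.b64encode(original_html.encode("ascii")).decode("ascii")
    # atob() decodes the Base64 blob; document.write() feeds the result
    # to the parser, so the page renders like the original source.
    return '<script>document.write(atob("' + payload + '"));</script>'


page = "<html><body><h1>Example</h1></body></html>"
print(wrap_with_document_write(page))
```

The Base64 alphabet contains no quotes or angle brackets, so the payload can be embedded in a JavaScript string literal without escaping.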

To find a benign HTML template that bypasses all 7 anti-phishing models, he iterated over the Majestic Million list of top sites to see if any of these sites bypassed all models. Not too far down the list, the Adobe website homepage somehow had the special sauce that bypassed all 7 of the anti-phishing models.
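The template search amounts to a first-match scan over candidate pages. The sketch below assumes each model can be called as a function from HTML to a phishing probability; in the competition the models sat behind an API, so every call in this loop would have counted toward the API total. The toy models are stand-ins for illustration only and have nothing to do with the real detectors.

```python
from typing import Callable, Iterable, Optional, Sequence

# Hypothetical interface: HTML source in, phishing probability out.
Model = Callable[[str], float]


def find_benign_template(
    candidates: Iterable[str],
    models: Sequence[Model],
    threshold: float = 0.1,
) -> Optional[str]:
    """Return the first candidate that every model scores below threshold."""
    for html in candidates:
        # all() stops at the first model that flags the page.
        if all(model(html) < threshold for model in models):
            return html
    return None


# Toy stand-in models (not the competition models).
toy_models = [
    lambda html: 0.05 if "<nav>" in html else 0.9,
    lambda html: 0.02 if "<footer>" in html else 0.8,
]
pages = ["<p>x</p>", "<nav></nav>", "<nav></nav><footer></footer>"]
print(find_benign_template(pages, toy_models))
```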

Finally, the benign template and the malicious JavaScript had to be combined so that everything still worked. Based on experimentation, the benign template was put into a head element that was later removed by JavaScript. The place of the malicious Base64 payload was the class attribute of a (later removed) div element.

The Winning Solution

The entity behind “npttlpwx” was Team Kaspersky (RU), led by Vladislav Tushkanov. They explained their approach here, in a high-quality and entertaining manner. When they started working on the competition, they noted that the leader had already achieved the highest possible score using just 343 API calls. Therefore, they needed to use their API calls sparingly, which ruled out standard attack methods. Their final solution was ingenious and stylish: they Base64-encoded the original phishing site and mixed the data blob with an excerpt from the Bible:

PGhlYWQ+PG1ldGEgY2hhcnNldD11dGYtOD48bWV0 1:2 And the earth was without form, and void; and darkness was upon
YSBjb250ZW50PSJ3aWR0aD1kZXZpY2Utd2lkdGgs the face of the deep. And the Spirit of God moved upon the face of the
IGluaXRpYWwtc2NhbGU9MS4wIiBuYW1lPXZpZXdw waters.
b3J0PjxtZXRhIGNvbnRlbnQ9IlNpdGUgRGVzaWdu ...

Then, they extracted the Base64 part of the payload and used document.write() to recreate the original HTML. The role of the Bible excerpt was to make the character distribution of the input more natural. Using this approach, they were able to fool all 7 models with all 10 inputs, using only 320 API calls.
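The mixing and extraction steps can be illustrated with a round trip in Python. This is a sketch of the encoding scheme only: the winning team performed the extraction in the browser, and the layout assumed here (one 40-character Base64 chunk at the start of each line, followed by a space and filler text) is inferred from the excerpt above. The function names and filler lines are hypothetical.

```python
import base64

CHUNK = 40  # Base64 characters per line, as in the excerpt above


def interleave(html, filler_lines):
    """Prefix each filler line with the next chunk of the Base64 blob."""
    blob = base64.b64encode(html.encode("ascii")).decode("ascii")
    chunks = [blob[i:i + CHUNK] for i in range(0, len(blob), CHUNK)]
    lines = []
    for n, chunk in enumerate(chunks):
        # Reuse filler lines cyclically if the blob is longer than the text.
        filler = filler_lines[n % len(filler_lines)]
        lines.append(chunk + " " + filler)
    return "\n".join(lines)


def extract(mixed):
    """Recover the HTML: the first token of every line is Base64 data."""
    blob = "".join(line.split(" ", 1)[0] for line in mixed.splitlines())
    return base64.b64decode(blob).decode("ascii")


html = "<head><meta charset=utf-8><meta content=x name=viewport></head>"
mixed = interleave(html, ["natural language filler", "more filler text"])
print(mixed)
print(extract(mixed) == html)
```

Because the Base64 alphabet contains no spaces, splitting each line at its first space is enough to separate data from filler in this layout.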

Comparing to Our Solution

It was interesting to see how the published solutions compare to our internal approach. We also used Base64 encoding and document.write() to recreate the original phishing content. However, we encoded only the body part of the phishing websites. In order to fool the ML models, we took snippets from popular websites and inserted them into the head or hidden div elements. We could customize the number of times the payload would be inserted into each snippet. Although the main ideas are common, we must admit that the top solutions are more universal and elegant than ours.

Summary

We would like to congratulate the winners and thank every participant for their efforts. We are glad that MLSEC’s new anti-phishing evasion track caught the attention of talented people. For us, the best part was reading the solution write-ups and seeing your diverse and ingenious ideas. We thought that the task would be on the difficult side, but it turned out that human creativity easily crushed machine intelligence. Nevertheless, AI will try to strike back in next year’s competition. 😉