Call for Contestants to Compete in the Annual Machine Learning Security Evasion Competition

The annual machine learning (ML) security evasion competition invites ML practitioners and security researchers to compete against their peers.

The Machine Learning Security Evasion Competition 2022 (MLSEC 2022) is a collaboration between Zoltan Balazs (Head of Vulnerability Research Lab at CUJO AI), Hyrum Anderson (Distinguished Engineer at Robust Intelligence), and Eugene Neelou (Co-Founder and CTO at Adversa AI). It gives researchers a chance to exercise their attacker muscles against ML security models, and it aims to raise awareness of the many ways threat actors can evade ML systems. “Watching the evasions evolve during the competition over the years is very exciting for us,” says Balazs. “We’re really excited about creating this opportunity to get researchers and cybersecurity professionals together to exercise their skills in a unique real-world setting.”

This year, the competition is organized around two separate tracks of the attacker challenge:

1. An anti-phishing evasion challenge, prepared by researchers at CUJO AI; and

2. A face recognition evasion challenge, prepared by the AI Red Team at Adversa AI.

Participants will also be able to use open-source tools, including Microsoft’s Counterfit, a tool for assessing ML model security. “Tools like Counterfit have made it possible for almost anyone to begin attacking machine learning systems. There is actually very little need for one to understand ML models or the math behind them to begin to find ways to evade them. More important is the curiosity and tenacity that will guide you through the necessary learning steps. Microsoft’s Counterfit does this – it wraps an excellent set of open source tools and presents a common interface to a user. Contestants who wish to use the tool should be able to start almost from ‘zero’, and end with a solution that seamlessly leverages some of the most exciting contributions to adversarial machine learning in the last five years.”

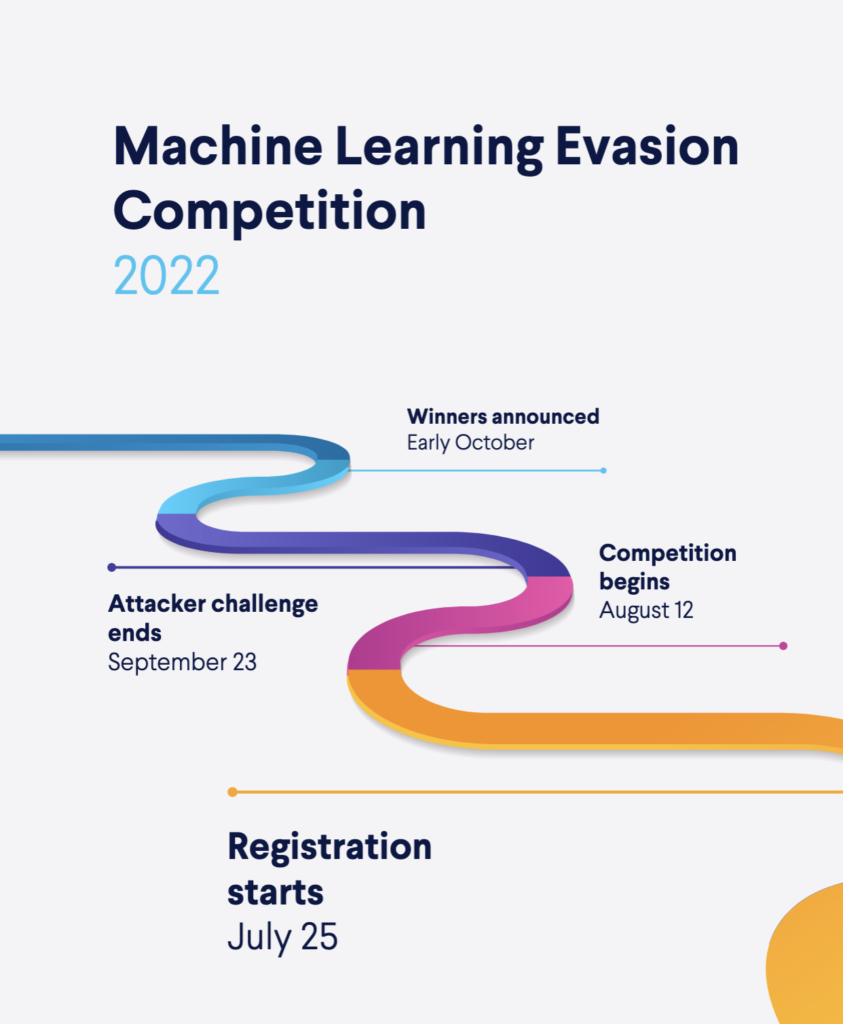

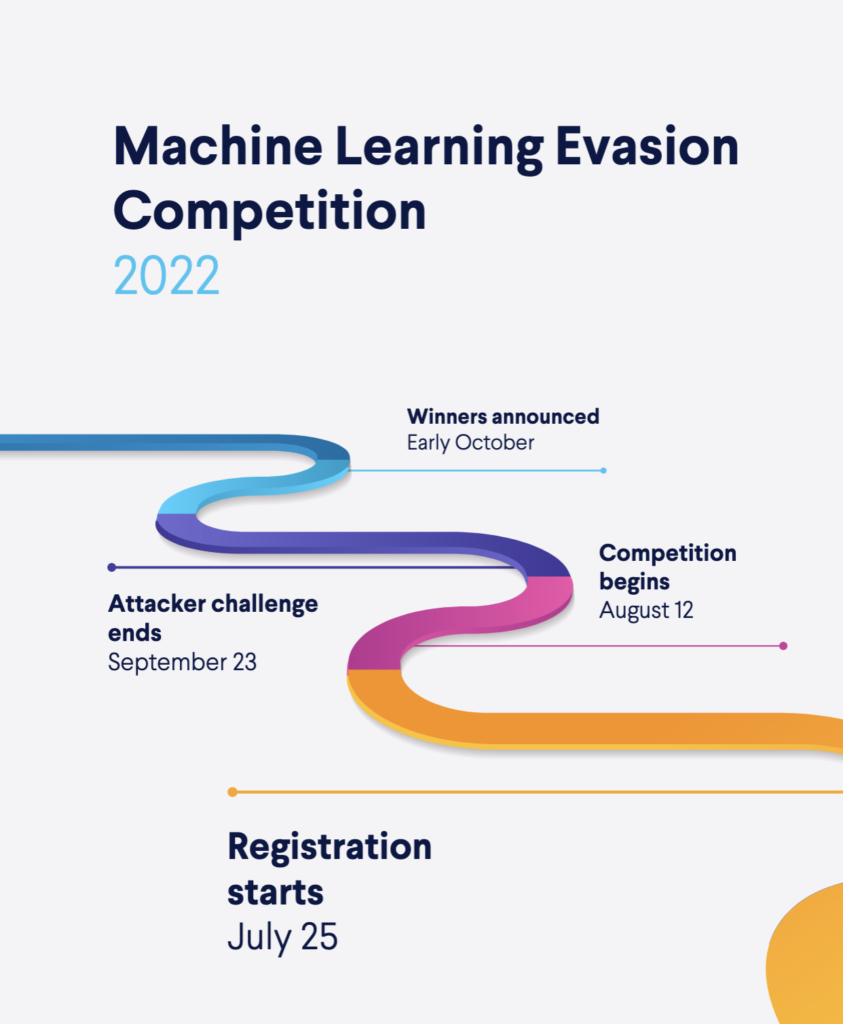

The Timeline of the Machine Learning Security Evasion Competition 2022

Anti-Phishing Evasion Track: Trespassing the Phishing Detection ML Models

For the second year in a row, the competition will include the Anti-Phishing Evasion track. The anti-phishing model provided by CUJO AI is a phishing website detector that classifies pages based on their HTML. Contestants will modify malicious phishing websites so that they still render the same way but evade the ML model. The track was first introduced to encourage broader participation, and it remains relevant as new platforms emerge. “Such platforms often do not experience the full extent of malicious abuse, making them a fertile ground for phishing attacks and fraud actors,” warns Dorka Palotay, Senior Threat Researcher at CUJO AI.

The phishing challenge uses a custom ML model built specifically for this competition. “It is relatively easier to modify HTML, therefore this challenge is set to attract researchers and practitioners of all levels to participate in this competition,” says Balazs.

New This Year: Introducing the Facial Recognition Evasion Track

New this year, the Attacker Challenge will include a Face Recognition Evasion track, provided by Adversa AI. Facial recognition systems identify people by matching unique mathematical and dynamic patterns in their faces, which has earned them a reputation as one of the safest and most effective biometric technologies.

However, as with any technology, facial recognition has potential drawbacks, such as threats to privacy, violations of rights and personal freedoms, data theft, and other crimes. The new track was added in response to the rapid adoption of biometrics and surveillance and the security threats they bring.

“Biometric AI-based facial applications are among the most vulnerable, yet most widely deployed – as shown by Adversa’s unique knowledge base of all existing attack methods across AI-driven industries – together with Automotive, Finance, and Internet,” says Neelou. “Our cyber-physical AI Red Team at Adversa AI has never seen a non-vulnerable ML model, API service, or smart physical device for facial recognition – and it’s terrifying.”

In the Facial Recognition Evasion track, contestants will receive a dataset of face images to modify so that the model recognizes one person as another. For each modified image that fools the model, contestants score points if the modified face still looks identical to the original. The highest-scoring contestant wins.

Register for MLSEC 2022

Individuals or teams can register at https://mlsec.io starting on the 25th of July. We will kick off the competition at the DEF CON AI Village, and it will run from the 12th of August through the 23rd of September. To compete, contestants must publish their detection or evasion strategies. The winners will be announced at the beginning of October, after which prizes will be awarded to the winners of each track.

Find out more about the previous edition, the Machine Learning Security Evasion Competition 2021.

About CUJO AI

CUJO AI greatly elevates Internet Service Providers’ ability to understand, serve & protect their customers with advanced cybersecurity and granular network & device intelligence. Deployed in tens of millions of homes and covering almost 2 billion connected devices, CUJO AI’s advanced AI algorithms help clients uncover previously unavailable insights to raise the bar on customer experience & retention with new value propositions and superior operational services. Fully compliant with all privacy regulations, CUJO AI services are trusted by the largest broadband operators around the world, including Comcast and Charter Communications.

About Adversa AI

Adversa AI is an innovative Israeli AI startup striving to increase trust and security in AI systems. The company is focused on protecting humanity from a cyber pandemic that seems inevitable in the era of artificial intelligence, with its security threats, privacy issues, and real safety incidents.

The team consists of world-class security experts, mathematicians, AI researchers, and neuroscientists who work together to shape the AI industry and explore AI systems to make them secure, safe, trustworthy, and reliable.

Adversa AI has been recognized several times as an award-winning vendor. Its patent-pending technology provides solutions for mission-critical AI-driven industries, including but not limited to smart home, biometrics, surveillance, finance, the Internet, and automotive.

About Robust Intelligence

Robust Intelligence is an end-to-end machine learning integrity solution that proactively eliminates failure at every stage of the ML lifecycle. From pre-deployment vulnerability detection and remediation to post-deployment monitoring and protection, Robust Intelligence gives organizations the confidence to scale models in production across a variety of use cases and modalities. This enables teams to harden their models and stop bad data, including adversarial attacks, from entering AI systems.